AI Driven Products

Media Strategy & Experimentation Tool

An AI-powered platform for planning and managing social media strategy. It serves two groups: campaign practitioners, who run campaigns and set up experiments, and media buyers, who focus on big-picture goals and performance measurement. The AI features suggest experiments, predict their likelihood of success, and check that each experiment meets quality standards so that its results are reliable. The platform helps teams collaborate, track progress, and stay aligned with client goals while using these insights to improve their campaigns.

The Gap Between Campaign Execution and Learning

Marketing teams invest heavily in campaign execution but struggle to extract timely, actionable insights from experiment results, especially when the analysis is done manually. No existing tool fully addressed the need to generate, track, and scale campaign insights for continuous performance improvement across platforms like Meta.

Scaling Insights Without Scaling Effort

Experimentation setup is manual and time-consuming. Insight generation is delayed and inconsistent. Learnings are not reusable across campaigns or teams. How might we enable marketers to run faster experiments, extract actionable insights, and scale impact without increasing effort or dependency on analysts?

- Manual, fragmented experiment setup across tools and teams

- Insight generation too slow to influence live campaigns

- Learnings locked in individual campaigns — not shared or searchable

Building from Scratch With No Competitor Reference

There were no direct competitor tools in the market. We built vision-first, collaborating with product owners and data teams running initial POCs. Introducing customer journey frameworks shifted conversations from features to user intent — and revealed two very different audiences needing the same platform: media buyers and campaign practitioners.

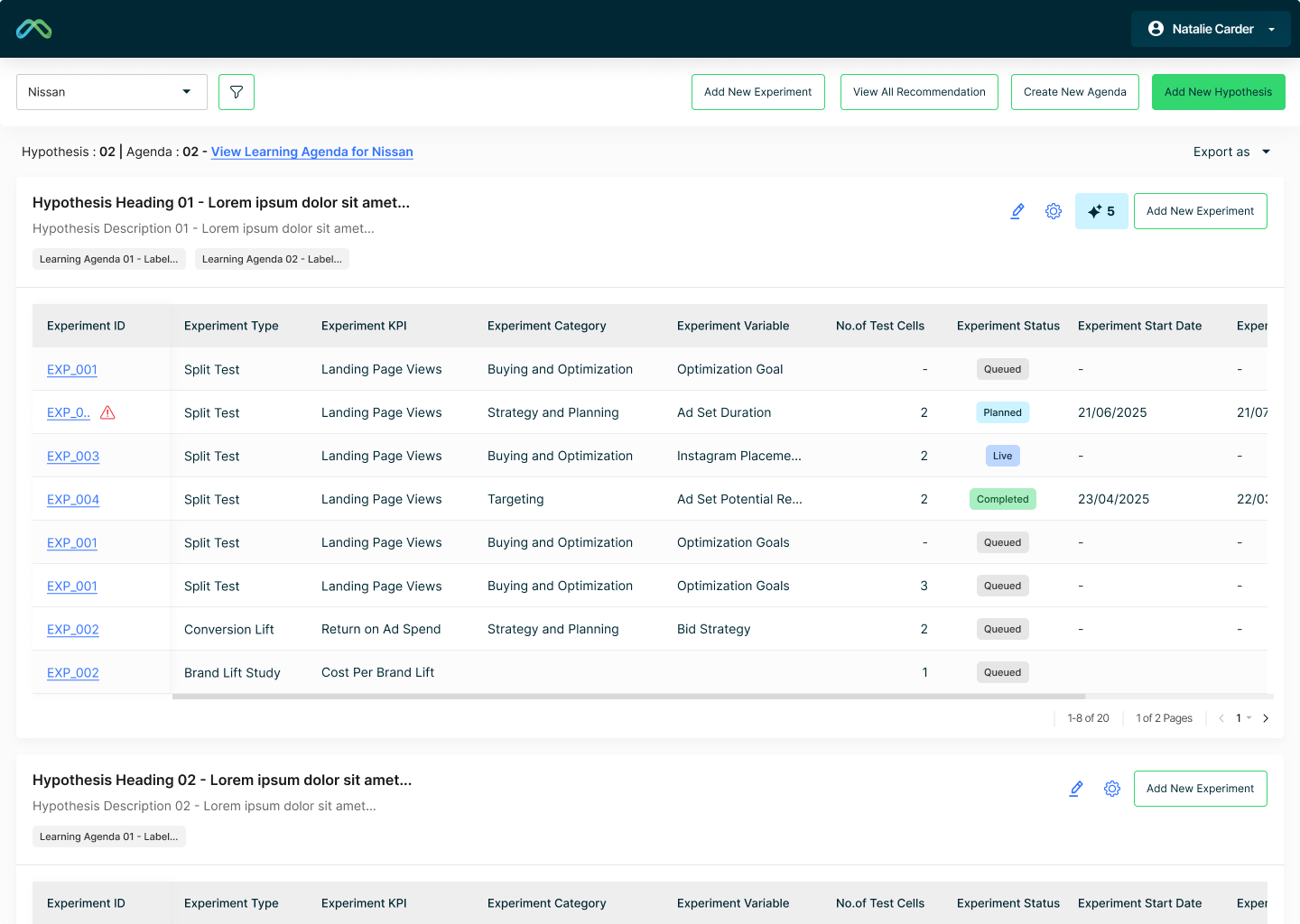

A Modular Architecture for Learning Agendas, Hypotheses, and Experiments

The platform is structured as a modular hierarchy: Learning Agendas hold the overarching business goals, Hypotheses branch from agendas, and Experiments are tied to hypotheses. On top of this structure, AI features suggest experiments, predict success using statistical models, flag conflicts, and surface actionable recommendations. This reduces dependency on analysts without removing analyst control.
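As a rough illustration of that hierarchy, the sketch below models agendas, hypotheses, and experiments as nested Python dataclasses. It is not the platform's actual data model: every class name, field, and the `find_conflicts` rule (two experiments on the same platform with overlapping dates) is a hypothetical assumption made for the example.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Tuple


@dataclass
class Experiment:
    name: str
    platform: str  # e.g. "Meta"
    start: date
    end: date


@dataclass
class Hypothesis:
    statement: str
    experiments: List[Experiment] = field(default_factory=list)


@dataclass
class LearningAgenda:
    business_goal: str
    hypotheses: List[Hypothesis] = field(default_factory=list)

    def all_experiments(self) -> List[Experiment]:
        # Flatten every experiment under every hypothesis of this agenda.
        return [e for h in self.hypotheses for e in h.experiments]


def find_conflicts(agenda: LearningAgenda) -> List[Tuple[str, str]]:
    """Assumed conflict rule: same platform and overlapping date ranges."""
    exps = agenda.all_experiments()
    conflicts = []
    for i, a in enumerate(exps):
        for b in exps[i + 1:]:
            overlaps = a.start <= b.end and b.start <= a.end
            if a.platform == b.platform and overlaps:
                conflicts.append((a.name, b.name))
    return conflicts


agenda = LearningAgenda(
    business_goal="Improve campaign performance",
    hypotheses=[
        Hypothesis("Short videos outperform static images", [
            Experiment("video-vs-static", "Meta", date(2024, 1, 1), date(2024, 1, 14)),
        ]),
        Hypothesis("Broad audiences lower acquisition cost", [
            Experiment("broad-vs-narrow", "Meta", date(2024, 1, 10), date(2024, 1, 24)),
        ]),
    ],
)
conflicts = find_conflicts(agenda)
```

Keeping each experiment attached to a hypothesis, and each hypothesis to an agenda, means a flagged conflict can always be traced back to the business goal it affects. The flag is a recommendation for a person to review, in line with the platform's approach of suggesting rather than mandating.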

Two Layers for Two Audiences — Buyers and Practitioners

The interface operates at two layers simultaneously. Media buyers get a high-level view of goals and outcomes. Campaign practitioners get detailed experiment controls. AI suggestions are surfaced as recommendations, not mandates — keeping user agency central throughout.

Usability Testing Validated the Vision

A simplified layout led to better comprehension across both user groups. Users independently asked for intelligent insights — directly confirming the AI-forward product direction. Despite inherent complexity, both media buyers and practitioners recognised the platform's long-term value.

Scoped for Phase 2 — And What We'd Do Differently

The platform is now scoped for Phase 2 with improved data visualisation and deeper AI intelligence. This project reinforced that data-heavy tools need mental model alignment before feature depth, and that testing with users earlier would have prevented late-stage pivots.

- Mental model alignment matters more than feature completeness

- No reference = freedom, but also the responsibility to validate your own bar

- Earlier testing would have prevented late-stage pivots
